If you want your website to perform well in search engines, you should provide a robots.txt file that tells their crawlers how to treat your site.

If your website doesn’t have a robots.txt file yet, learn how to create one for your website and make sure that it doesn’t contain any errors.

What is robots.txt?

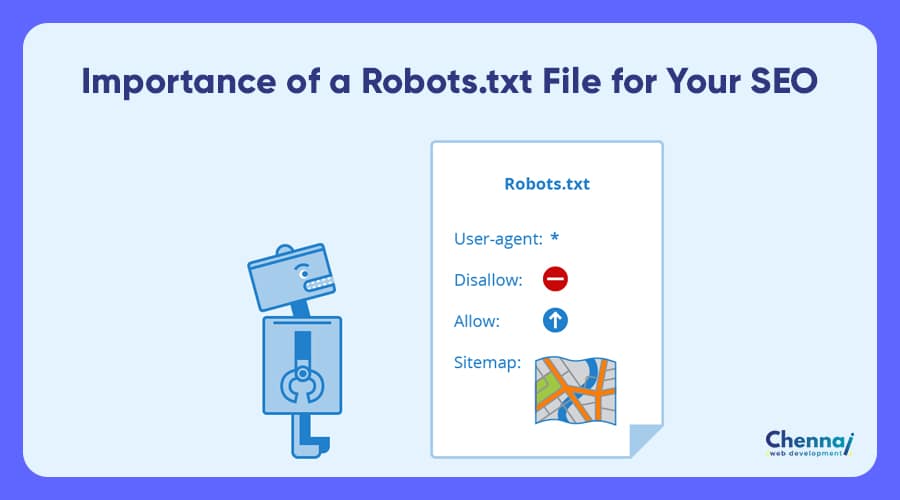

When a search engine crawler visits your site, it first checks whether a robots.txt file is present. This file tells the crawler which pages of your site it may crawl and which pages it should ignore. Note that robots.txt controls crawling rather than indexing: a blocked page can still show up in search results if other pages link to it.

Robots.txt is a simple text file that must be placed in the root directory of your website, for example:

http://domain.com/robots.txt
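Because crawlers only look for the file at the root of a host, the robots.txt location for any page can be derived from the page's own URL. The sketch below uses Python's standard `urllib.parse`; the function name `robots_url` and the sample page URL are illustrative assumptions, not part of any crawler's API.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url.

    Only the scheme and host (including any port) are kept; the page's
    path, query string and fragment are replaced by /robots.txt.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("http://domain.com/blog/post.html?id=7"))
# http://domain.com/robots.txt
```

A file placed at, say, http://domain.com/blog/robots.txt would therefore not be consulted.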

How do I create a robots.txt file?

Robots.txt is a plain text file, so you can create it in any text editor. The file consists of one or more entries called “records”.

Each record contains instructions for search engine crawlers and has two parts: a User-agent line and one or more Disallow lines. For example:

User-agent: googlebot
Disallow: /cgi-bin/

The record above tells Googlebot, Google’s search engine crawler, that it may retrieve every page except those in the cgi-bin directory, so Googlebot ignores all files in /cgi-bin/. The Disallow value works as a path prefix: any URL whose path begins with /cgi-bin/ is blocked.
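One way to check how a crawler would read this record is Python's standard `urllib.robotparser`, which implements the robots exclusion rules. The sketch below parses the example record and asks whether a few URLs may be fetched; the page paths and the crawler name `otherbot` are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# The example record: the User-agent line followed directly by its
# Disallow line (a blank line here would end the record early).
ROBOTS_TXT = """\
User-agent: googlebot
Disallow: /cgi-bin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Pages outside /cgi-bin/ are allowed for Googlebot; pages under it are not.
print(parser.can_fetch("googlebot", "http://domain.com/index.html"))         # True
print(parser.can_fetch("googlebot", "http://domain.com/cgi-bin/search.pl"))  # False

# The record names only googlebot, so a hypothetical other crawler is unaffected.
print(parser.can_fetch("otherbot", "http://domain.com/cgi-bin/search.pl"))   # True
```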

If you leave the Disallow value blank, search engines are allowed to crawl every page. In any case, every User-agent record must contain at least one Disallow line.
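The difference between an empty Disallow value and a Disallow value of "/" can be seen with Python's standard `urllib.robotparser`. This is a small sketch; the "Disallow: /" record is added only for contrast and the URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# An empty Disallow value blocks nothing, so every page may be crawled.
allow_all = RobotFileParser()
allow_all.parse(["User-agent: *", "Disallow:"])
print(allow_all.can_fetch("googlebot", "http://domain.com/cgi-bin/search.pl"))  # True

# For contrast, "Disallow: /" matches every path and blocks the whole site.
block_all = RobotFileParser()
block_all.parse(["User-agent: *", "Disallow: /"])
print(block_all.can_fetch("googlebot", "http://domain.com/index.html"))  # False
```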

If you want to give the same rights to all search engines, put the following content in the robots.txt file in your root directory:

User-agent: *
Disallow: /cgi-bin/
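If you generate robots.txt from a script instead of typing it by hand, the record structure described above maps naturally onto a small helper. The sketch below is a hypothetical `build_robots_txt` function (not part of any library) that takes a dictionary of user agents and their disallowed paths and writes one Disallow line per directory, falling back to an empty Disallow when a record has no paths.

```python
def build_robots_txt(records: dict[str, list[str]]) -> str:
    """Render {user_agent: [disallowed paths]} as robots.txt text.

    Each record is a User-agent line followed by one Disallow line per
    path; an empty path list becomes a bare "Disallow:" (allow everything).
    Records are separated by a single blank line.
    """
    blocks = []
    for agent, paths in records.items():
        lines = [f"User-agent: {agent}"]
        # One directory per Disallow line; fall back to an empty Disallow.
        lines += [f"Disallow: {path}" for path in paths] or ["Disallow:"]
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks) + "\n"


# The "same rights for every crawler" example from the text.
text = build_robots_txt({"*": ["/cgi-bin/"]})
print(text, end="")
# User-agent: *
# Disallow: /cgi-bin/
```

You would then save the returned text as robots.txt in your site's root directory; the dictionary input format is only one possible design.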

Where can you find user-agent names?

You can find user-agent names in your server’s log files by looking at the requests for robots.txt. In most cases all search engines should be given the same rights; then you can simply use “User-agent: *”.
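Scanning the logs for robots.txt requests can be automated. The sketch below assumes your web server writes the Apache/Nginx "combined" log format, where the request line is the first quoted field and the user agent is the last quoted field; the helper name `robots_txt_agents` and the sample log lines (which use documentation IP addresses) are made up for illustration.

```python
import re
from collections import Counter

# Assumes the "combined" log format: the request line is the first quoted
# field and the user agent is the last quoted field on the line.
LOG_LINE = re.compile(r'"(?P<method>\S+) (?P<path>\S+) [^"]*" .* "(?P<agent>[^"]*)"$')


def robots_txt_agents(log_lines):
    """Count the user agents that requested /robots.txt."""
    agents = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.rstrip("\n"))
        if match and match.group("path") == "/robots.txt":
            agents[match.group("agent")] += 1
    return agents


sample = [
    '192.0.2.10 - - [23/Apr/2021:10:00:00 +0000] "GET /robots.txt HTTP/1.1" 200 68 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '198.51.100.7 - - [23/Apr/2021:10:00:05 +0000] "GET /index.html HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]
print(robots_txt_agents(sample))
# Counter({'Googlebot/2.1 (+http://www.google.com/bot.html)': 1})
```

Lines in other log formats simply do not match the pattern and are skipped, so adjust the regular expression to your server's configuration.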

Things you should avoid in robots.txt

Format your robots.txt file carefully; otherwise some of your web pages may not be crawled the way you intend. Avoid the following:

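–> Don’t put a comment on the same line as a command; if you need comments, place them on their own lines starting with #.

–> Never put whitespace at the beginning of a line.

–> Don’t change the order of the commands: the User-agent line comes first, followed by its Disallow lines.

–> Don’t list more than one directory in a single Disallow line; use a separate Disallow line for each directory.

–> Make sure the file is named exactly robots.txt in lowercase, and type the paths in your Disallow lines with the correct case, because URL paths are case-sensitive.

–> Don’t rely on the “Allow” command for every crawler: it was not part of the original robots.txt standard, although major search engines such as Google support it.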
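Several of the mistakes listed above can be caught mechanically before you upload the file. The sketch below is a hypothetical checker, `lint_robots_txt`, written for this article rather than taken from any tool; it covers only three rules (leading whitespace, a Disallow line before any User-agent line, and more than one path on one Disallow line) and ignores anything after a # on a line.

```python
def lint_robots_txt(text: str) -> list[str]:
    """Return warnings for three of the mistakes listed above.

    Checks only: whitespace at the start of a line, a Disallow line that
    appears before any User-agent line, and several paths on one Disallow line.
    """
    warnings = []
    seen_user_agent = False
    for number, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # blank lines just separate records
        if line[0].isspace():
            warnings.append(f"line {number}: leading whitespace")
        field, _, value = line.strip().partition(":")
        field = field.strip().lower()
        value = value.split("#", 1)[0]  # ignore any trailing comment
        if field == "user-agent":
            seen_user_agent = True
        elif field == "disallow":
            if not seen_user_agent:
                warnings.append(f"line {number}: Disallow before any User-agent")
            if len(value.split()) > 1:
                warnings.append(f"line {number}: more than one path in Disallow")
    return warnings


sample = """\
Disallow: /tmp/
User-agent: *
  Disallow: /cgi-bin/ /private/
"""
for warning in lint_robots_txt(sample):
    print(warning)
# line 1: Disallow before any User-agent
# line 3: leading whitespace
# line 3: more than one path in Disallow
```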
